The treatment of epistemic logic as a branch of modal logic brings some advantages. However, there is a high price to pay. The most important objection to the modal approach is that it makes unrealistic assumptions about the reasoning power of the agents. The problem is known as the "logical omniscience problem" (LOP) and occurs in several forms. In its strongest form the problem can be stated as follows:

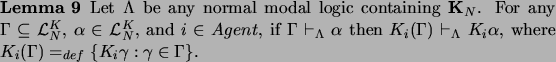

That is, whenever an agent knows all of the formulae in a set and a formula follows logically from that set, then the agent also knows that formula. In particular, the agent knows all theorems (taking the set in lemma 9 to be the empty set), and he knows all logical consequences of a sentence that he knows (taking the set to consist of a single sentence).
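The closure property can be illustrated with a small sketch. It assumes the usual possible-worlds reading of the modal approach (an agent knows a formula if it holds in every world the agent considers possible) and restricts itself to a single agent and two propositional atoms. The tuple encoding of formulae and the names `holds`, `knows`, `follows` and `find_counterexample` are hypothetical choices made for this illustration, not notation from the text.

```python
from itertools import product

# Toy setting (an assumption for illustration): worlds are the truth
# assignments to the atoms p and q.
ATOMS = ("p", "q")
WORLDS = [dict(zip(ATOMS, values))
          for values in product([True, False], repeat=len(ATOMS))]


def holds(formula, world):
    """Evaluate a propositional formula, encoded as a nested tuple, in a world."""
    op = formula[0]
    if op == "atom":
        return world[formula[1]]
    if op == "not":
        return not holds(formula[1], world)
    if op == "or":
        return holds(formula[1], world) or holds(formula[2], world)
    if op == "implies":
        return (not holds(formula[1], world)) or holds(formula[2], world)
    raise ValueError(f"unknown operator: {op}")


def knows(formula, accessible):
    """Modal reading of knowledge: true in all worlds considered possible."""
    return all(holds(formula, w) for w in accessible)


def follows(premises, conclusion):
    """Logical consequence, checked by brute force over all worlds."""
    return all(holds(conclusion, w)
               for w in WORLDS
               if all(holds(p, w) for p in premises))


def find_counterexample(premises, conclusion):
    """Search every set of accessible worlds for a state in which the agent
    knows all premises but not the conclusion. Returns None if none exists."""
    for mask in range(1 << len(WORLDS)):
        accessible = [w for i, w in enumerate(WORLDS) if (mask >> i) & 1]
        if all(knows(p, accessible) for p in premises) \
                and not knows(conclusion, accessible):
            return accessible
    return None


P = ("atom", "p")
Q = ("atom", "q")
P_IMPLIES_Q = ("implies", P, Q)
TAUTOLOGY = ("or", P, ("not", P))

if __name__ == "__main__":
    # q follows from {p, p -> q}; no epistemic state separates them.
    print(follows([P, P_IMPLIES_Q], Q), find_counterexample([P, P_IMPLIES_Q], Q))
    # Empty premise set: the tautology is known in every epistemic state.
    print(find_counterexample([], TAUTOLOGY))
    # q does not follow from {p}; here a separating state exists.
    print(follows([P], Q), find_counterexample([P], Q))
```

In this toy model the search finds no state in which the agent knows the premises but fails to know a logical consequence of them, however the accessible worlds are chosen. That is the closure property in miniature: the semantics leaves no room for an agent who has not yet drawn the inference.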

Besides this strong form there are other, generally weaker forms of logical omniscience. The following are listed in [FHMV95]:

The list of questionable properties could be extended to include any other instance of the rule (RK![]()) (from ![]() to infer ![]()). Moreover, the axiom schemata (D), (4) and (5) can also be shown to be too strong for realistic agents. In particular, under certain circumstances axiom (5) suggests that agents can even decide undecidable problems ([BS92], [SW94])! In general, there seems to be no genuine epistemic principle that may claim universal validity.

If epistemic logic is to be interpreted as describing the actual knowledge of realistic (though idealized) agents, then the closure properties discussed above require agents to be very powerful reasoners, with computational capacities that real (human or artificial) agents cannot achieve: real agents are simply not logically omniscient. Logical omniscience poses a problem because it contradicts the fact that agents are limited in their reasoning powers. They are inherently resource-bounded and therefore cannot handle an unlimited amount of information. Agents may immediately establish certain logical truths or simple consequences of what they have consciously assented to. However, there are highly remote dispositional states which could only be established by complex, time-consuming reasoning. The modal framework cannot distinguish between a sentence that an agent has consciously assented to and a piece of potential knowledge that the agent could never make actual. It is therefore not suited to modelling resource-bounded reasoning.